- History of texture streaming: Classic, PRT, PRT+ (SFS)


Classic Texture Streaming

Let’s start with classic texture streaming, the most basic and simplest of them all.

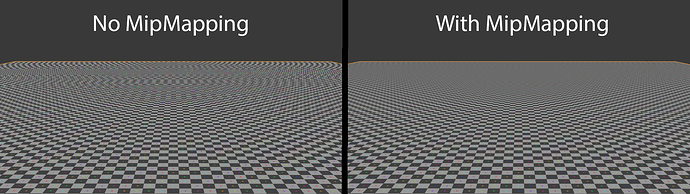

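As we’ve talked about with “mipmapping”, developers already have a set of smaller copies of every texture: each mip level is half the width and half the height of the previous one, so Mip1 takes only a quarter of the memory of Mip0, Mip2 a sixteenth, and so on.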
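A minimal Python sketch of that halving, assuming an uncompressed 4-byte-per-texel format (`mip_chain` is a hypothetical helper, not an engine API). It lists every level of a mip chain and shows that the whole chain costs only about 4/3 of Mip0 alone.

```python
def mip_chain(width: int, height: int, bytes_per_texel: int = 4):
    """List (level, width, height, bytes) for every mip level down to 1x1."""
    levels = []
    level = 0
    while True:
        levels.append((level, width, height, width * height * bytes_per_texel))
        if width == 1 and height == 1:
            break
        # Each level halves both dimensions, never going below one texel.
        width = max(1, width // 2)
        height = max(1, height // 2)
        level += 1
    return levels


chain = mip_chain(4096, 4096)  # 4K texture, uncompressed RGBA8 (assumption)
mip0_bytes = chain[0][3]
for level, w, h, size in chain[:4]:
    print(f"Mip{level}: {w}x{h}, {size / mip0_bytes:.4f} of Mip0's memory")
total = sum(size for _, _, _, size in chain)
print(f"Whole chain: {total / mip0_bytes:.3f} x Mip0")
```

Each level is a quarter of the one before it, which is why dropping even one or two levels frees most of a texture's memory.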

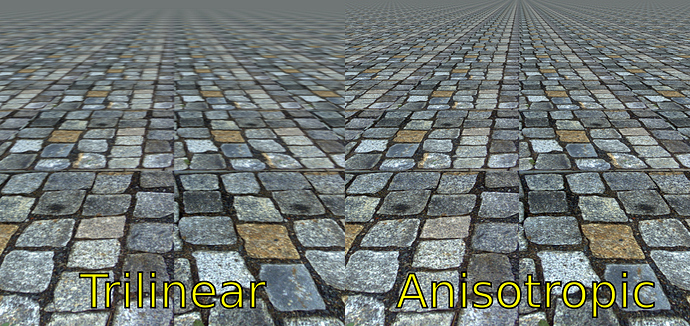

So, to save precious memory, developers started looking for ways to use the coarser mips (Mip8, just as an example) wherever they could. Before classic texture streaming, everything in a game level was loaded at Mip0. With classic streaming, developers can use a different mip level for each object, depending on its distance or on-screen size.
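Classic streaming needs some rule for picking a mip per object. Below is one possible heuristic, sketched under our own assumptions: square textures, the object's on-screen width as the only input, and `choose_streamed_mip` as a hypothetical name rather than any engine's API. It drops one mip level for every halving of the on-screen size, so the resident texels roughly match the pixels they cover.

```python
import math


def choose_streamed_mip(texture_size: int, screen_pixels: float, mip_count: int) -> int:
    """Hypothetical heuristic: finest mip worth keeping resident for one object.

    texture_size:  width of Mip0 in texels (square texture assumed).
    screen_pixels: approximate on-screen width of the object in pixels.
    mip_count:     number of levels in the texture's mip chain.
    """
    coarsest = mip_count - 1
    if screen_pixels <= 0:
        return coarsest  # off-screen: keep only the coarsest mip
    ratio = texture_size / screen_pixels  # texels per screen pixel
    if ratio <= 1:
        return 0  # magnified or 1:1, so full detail is visible
    # Every halving of the on-screen size lets us drop one mip level.
    return min(math.floor(math.log2(ratio)), coarsest)


# A 4K texture (13 mips) on objects at different distances.
for pixels in (4096, 512, 16, 0):
    print(pixels, "px ->", "Mip", choose_streamed_mip(4096, pixels, 13))
```

Real engines weigh more inputs (memory budget, priority, camera speed), but the core idea is the same: one mip choice per object.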

Partially Resident Texture or Virtual Texture

PRT (Partially Resident Textures) is the name AMD used for the hardware feature, while engines such as idTech and Unreal Engine call it Virtual Texturing. But generally they’re the same thing.

As time moves on, Mip0 keeps getting larger. We’re seeing 4K and 8K textures now, which can be a huge memory burden when loaded as a whole.

So, what about just loading parts of them?

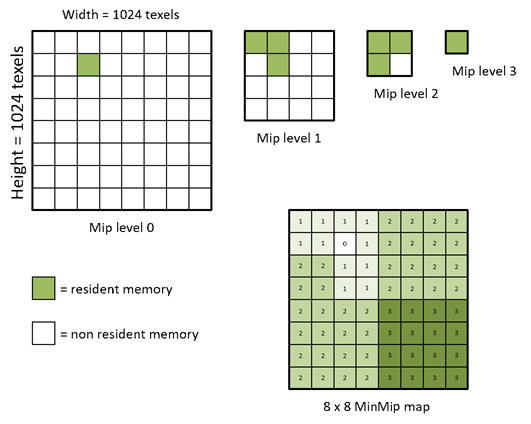

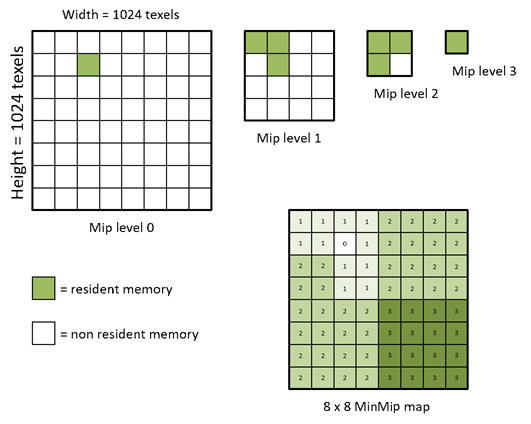

PRT uses the same idea as virtual memory: we don’t have to load every part of a texture into memory. Instead, we divide the large texture into small tiles.

By dividing the large texture into an array of tiles, we get much finer-grained control, because each tile can be loaded or evicted on its own.
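To put numbers on the tiles, here is a sketch that assumes Direct3D 12-style 64 KB tiles, which hold 128x128 texels for a 4-byte-per-texel format. `tiles_per_mip` and `PageTable` are hypothetical helpers: the page table plays the role of a CPU page table, mapping a virtual tile either to a physical memory slot or to nothing if that tile isn’t loaded. It ignores the packed mip tail that real hardware uses for the smallest levels.

```python
from dataclasses import dataclass, field

TILE_BYTES = 64 * 1024  # Direct3D 12 tiled resources use 64 KB tiles
TILE_TEXELS = 128       # 128x128 texels fill a 64 KB tile at 4 bytes per texel


def tiles_per_mip(width: int, height: int, mip: int) -> tuple:
    """Tiles (across, down) needed to cover one mip level of a texture."""
    w = max(1, width >> mip)
    h = max(1, height >> mip)
    # Round up: a partly filled tile still occupies a whole tile of memory.
    return (-(-w // TILE_TEXELS), -(-h // TILE_TEXELS))


@dataclass
class PageTable:
    """Hypothetical virtual-to-physical tile map, like a CPU page table."""
    mapping: dict = field(default_factory=dict)  # (mip, tx, ty) -> physical slot

    def map_tile(self, mip: int, tx: int, ty: int, slot: int) -> None:
        self.mapping[(mip, tx, ty)] = slot

    def unmap_tile(self, mip: int, tx: int, ty: int) -> None:
        self.mapping.pop((mip, tx, ty), None)

    def lookup(self, mip: int, tx: int, ty: int):
        # None means "not resident": the sampler must fall back to a coarser mip.
        return self.mapping.get((mip, tx, ty))


across, down = tiles_per_mip(8192, 8192, 0)
print(f"8K Mip0: {across}x{down} tiles = {across * down * TILE_BYTES // 2**20} MB")
table = PageTable()
table.map_tile(0, 10, 20, slot=0)  # only this one Mip0 tile is loaded
print(table.lookup(0, 10, 20), table.lookup(0, 11, 20))
```

For an uncompressed 8K texture, Mip0 alone is 64x64 = 4096 tiles (256 MB), while Mip7 fits in a single tile, which is exactly why loading only some tiles pays off.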

Different parts of the texture can then come from different mip levels: some tiles from Mip0, others from Mip3 or so.

The MinMip map above shows an 8x8 area in which each region requests a different mip level.

In this particular example,

Every tile has the same memory size.

A single Mip1 tile covers the area of (2^1)^2 = 4 Mip0 tiles. It is therefore 4 times less detailed, yet it takes the same memory as one Mip0 tile.

Likewise, a Mip2 tile covers (2^2)^2 = 4^2 = 16 Mip0 tiles, so it is 16 times less detailed for the same memory.

And a Mip3 tile covers (2^3)^2 = 64 Mip0 tiles, the whole 8x8 area single-handedly. Awesome, right? But its quality is extremely poor, so we can only use it on the least important parts.
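The three cases above follow one rule. For a tile at mip level $m$:

$$
\text{area covered by one Mip}\,m\text{ tile} = \left(2^{m}\right)^{2} = 4^{m}\ \text{Mip0 tiles},
\qquad
\text{detail relative to Mip0} = 4^{-m}
$$

So $m = 1, 2, 3$ give 4, 16 and 64 Mip0 tiles, and since every tile costs the same memory, a Mip $m$ tile spends only $4^{-m}$ as much memory per unit area as Mip0.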

Before PRT, we needed 64 tiles’ worth of memory to cover that 8x8 area at Mip0.

With PRT, we can cover the same area with 1+3+3+1 = 8 tiles. Assuming the chosen mip levels are the right ones, that’s a huge saving, isn’t it?
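The counting can be automated. The sketch below takes a MinMip map (one requested mip per Mip0-tile-sized cell) and counts the distinct tiles it needs; a cell asking for mip m is served by tile (x >> m, y >> m) of that level. The map used here is a hypothetical one where detail falls off away from the top-left corner, not the one in the figure, and keeping one Mip3 tile resident as a fallback is our own assumption.

```python
from collections import Counter


def tiles_needed(min_mip, fallback_mip=None):
    """Count the distinct tiles, per mip level, that a MinMip map asks for.

    min_mip[y][x] is the mip requested for a cell the size of one Mip0 tile.
    One Mip m tile spans 2^m x 2^m cells, so cell (x, y) asking for mip m is
    served by tile (x >> m, y >> m) of level m.
    """
    needed = set()
    for y, row in enumerate(min_mip):
        for x, m in enumerate(row):
            needed.add((m, x >> m, y >> m))
    if fallback_mip is not None:
        # Assumes the map is exactly one fallback tile wide (8x8 cells for Mip3),
        # so tile (0, 0) of that level covers the whole area as a safety net.
        needed.add((fallback_mip, 0, 0))
    return Counter(m for m, _, _ in needed)


def example_map(size=8):
    """Hypothetical map: detail falls off away from the top-left corner."""
    def mip(x, y):
        if x < 2 and y < 2:
            return 0
        if x < 4 and y < 4:
            return 1
        return 2
    return [[mip(x, y) for x in range(size)] for y in range(size)]


counts = tiles_needed(example_map(), fallback_mip=3)
print(dict(sorted(counts.items())), "->", sum(counts.values()), "tiles instead of", 8 * 8)
```

For this map that is 4 + 3 + 3 + 1 = 11 tiles instead of 64; the exact count depends entirely on how good the MinMip map is.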

Well, that’s where things get tricky: how do we make sure the mip selection is right?

Before Sampler Feedback, developers lacked the ability to optimize things down to the very last drop.

They could only make guesses based on visibility, importance and so on, without direct control. It’s like riding a bike with your hands off the handlebars: yes, you can still steer with your balance and speed, but isn’t that shaky?

PRT+ (Sampler Feedback)

Time to save the day! With DirectX 12 Ultimate, developers can now get reports from the sampler and use them to minimize artifacts, lag spikes and wasted memory. We can finally put our hands back on the handlebars.

Traditional PRT solutions were based on guesses.

PRT+ (PRT with Sampler Feedback) is based on hard facts. Samplers are the real end consumers of texture assets, so they know exactly what they need (unlike some poor markets in other areas of gaming, just kidding LOL). With SF, the streaming engine only streams the assets that are actually needed, with no waste.
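To make “the sampler knows what it needs” concrete, here is a toy simulation. In Direct3D 12 the feedback is written on the GPU into a dedicated feedback map; the class below (`MinMipFeedback`, a hypothetical name) only mimics the idea of a MinMip feedback map: every sample lowers its region’s entry to the finest mip it used, so after a frame the map lists what was actually needed.

```python
import math


class MinMipFeedback:
    """Toy stand-in for a MinMip sampler-feedback map (hypothetical class).

    Each cell covers one region of the texture and remembers the finest
    (lowest-numbered) mip level the sampler actually used there this frame.
    """

    NOT_SAMPLED = math.inf

    def __init__(self, cells_x: int, cells_y: int):
        self.cells_x, self.cells_y = cells_x, cells_y
        self.clear()

    def clear(self) -> None:
        """Reset at the start of a frame."""
        self.cells = [[self.NOT_SAMPLED] * self.cells_x for _ in range(self.cells_y)]

    def record(self, u: float, v: float, lod: float) -> None:
        """Log one texture sample at (u, v) in [0, 1) with the LOD the filter chose."""
        x = min(int(u * self.cells_x), self.cells_x - 1)
        y = min(int(v * self.cells_y), self.cells_y - 1)
        finest = max(0, math.floor(lod))  # a trilinear fetch touches floor(lod) and the next level
        self.cells[y][x] = min(self.cells[y][x], finest)


fb = MinMipFeedback(4, 4)
fb.record(0.10, 0.10, 2.6)  # distant sample near the top-left corner
fb.record(0.12, 0.05, 0.3)  # close-up sample in the same region wins
fb.record(0.90, 0.90, 5.0)
print(fb.cells[0][0], fb.cells[3][3], fb.cells[1][1])
```

Regions that were never sampled stay at `inf`, which is the “hard fact” that nothing there needs to be streamed in.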

However, PRT+ does need hardware support: at minimum, a modern GPU and an SSD.

And even PRT+ can be refined and optimized further, which finally brings us to the almighty:

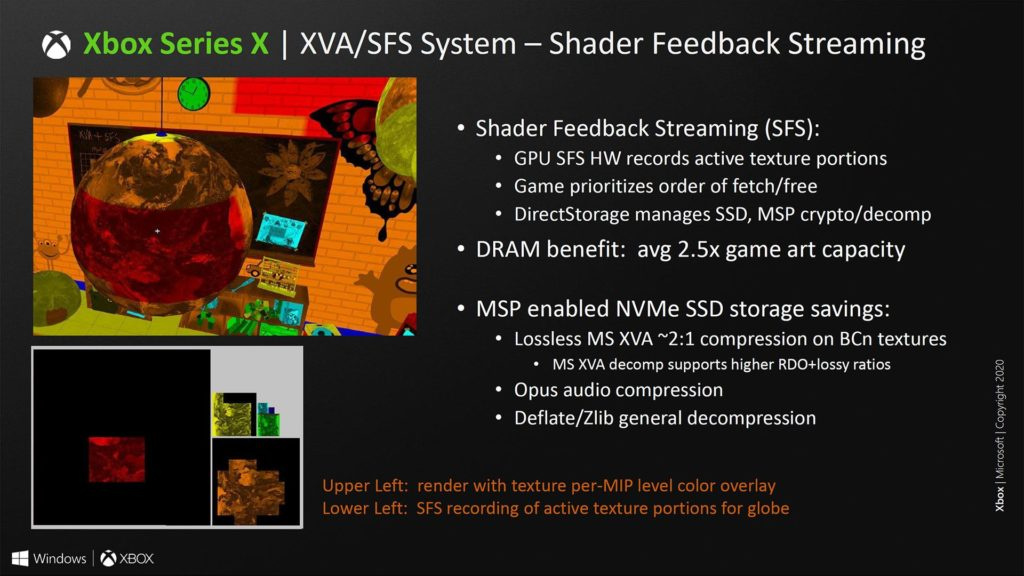

Sampler Feedback Streaming

SFS is built on PRT+, and PRT+ is built on PRT and Sampler Feedback. SFS is a complete solution for texture streaming, combining hardware and software optimizations.

First, Microsoft built caches for the Residency Map and the Request Map, which record asset requests on the fly. Compared with traditional PRT methods, it’s like going from checking a paper map to having a GPS.
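Here is a sketch of what the streaming side might do with those two maps once a frame’s requests are in, regardless of how the hardware caches them. `plan_streaming` is a hypothetical function, not part of any Microsoft API, and it works on plain per-region mip numbers: loads are ordered coarse to fine so each step improves the image, and regions nobody sampled are trimmed back to the coarsest mip.

```python
import math


def plan_streaming(requested, resident, coarsest_mip):
    """Diff a request map against a residency map (hypothetical helper).

    requested[y][x]: finest mip the sampler asked for (math.inf if unsampled).
    resident[y][x]:  finest mip currently loaded for that region.
    Returns (loads, evictions), each a list of (x, y, mip).
    Simplification: tiles are tracked per region, ignoring that one coarse
    tile is shared by several neighboring regions.
    """
    loads, evictions = [], []
    for y, (req_row, res_row) in enumerate(zip(requested, resident)):
        for x, (want, have) in enumerate(zip(req_row, res_row)):
            want = min(want, coarsest_mip)  # unsampled regions keep only the coarsest mip
            if want < have:
                # More detail needed: load the missing levels from coarse to fine.
                loads.extend((x, y, m) for m in range(have - 1, want - 1, -1))
            elif want > have:
                # More detail loaded than requested: the finer levels can be evicted.
                evictions.extend((x, y, m) for m in range(have, want))
    return loads, evictions


requested = [[0, math.inf], [2, 3]]
resident = [[2, 0], [2, 3]]
loads, evictions = plan_streaming(requested, resident, coarsest_mip=3)
print("load:", loads)
print("evict:", evictions)
```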

Second, you need a fast SSD to use PRT+ and squeeze everything out of the available RAM. You wouldn’t want to use an HDD with PRT+, because when an asset request comes in, it has to be answered fast (within milliseconds!). The SSD on Xbox prioritizes game asset streaming to keep latency as low as possible.
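A back-of-the-envelope sketch of why the drive matters: how many 64 KB tiles can arrive within one 60 fps frame. The 2.4 GB/s figure is the Xbox Series X SSD’s published raw throughput and its access latency is simply ignored here; the 100 MB/s throughput and 10 ms seek time for the HDD are rough assumptions, and `tiles_per_frame` is a hypothetical name.

```python
TILE_BYTES = 64 * 1024  # one 64 KB tile


def tiles_per_frame(bytes_per_second: float, fps: float, latency_s: float = 0.0) -> int:
    """Rough number of 64 KB tiles a drive can deliver within one frame.

    Pays one access latency up front, then assumes a steady transfer rate.
    """
    usable_s = max(0.0, 1.0 / fps - latency_s)
    return int(bytes_per_second * usable_s // TILE_BYTES)


# 2.4 GB/s is the Xbox Series X SSD's raw rate; the HDD numbers are rough assumptions.
print("SSD:", tiles_per_frame(2.4e9, 60), "tiles per frame")
print("HDD:", tiles_per_frame(100e6, 60, latency_s=0.010), "tiles per frame")
```

That works out to roughly 610 tiles per frame for the SSD versus about 10 for the HDD once its seek time is paid, so a request that arrives this frame can realistically be answered this frame only on the SSD.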

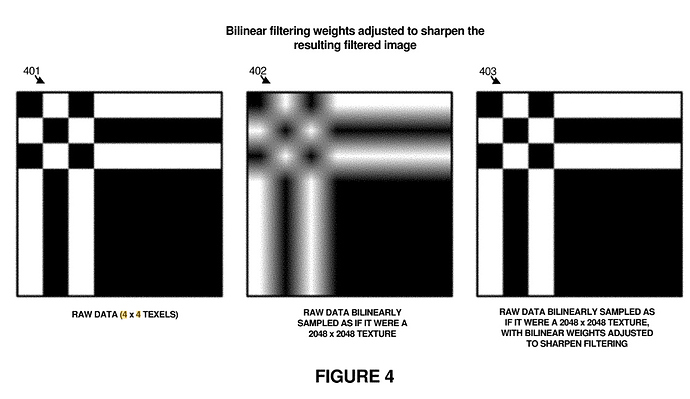

Third, Microsoft implemented a new hardware method for texture filtering and sharpening. It smooths the loading transition from Mip8 to Mip4, Mip0 and so on. It’s not magic, but it works like magic:

As we have stated, the sampler knows what it needs. The developer can answer a request for Mip 0 by providing Mip 0.8 on frame 1, Mip 0.4 on frame 2, and finally Mip 0 on frame 3.

The fractional part is used in texture filtering: it sets how much of each of the two neighboring mip levels is blended in, so the filter can present the smoothest possible transition between LOD changes.

It also gives the storage system more time to load assets without showing artifacts.
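A sketch of that blending, assuming an ordinary trilinear filter with a fractional minimum-LOD clamp; Microsoft’s custom hardware filter is not reproduced here, and `filtered_sample` is a hypothetical helper that reduces each mip to a single number. The clamp follows the 0.8, 0.4, 0 ramp from the text, so the result fades toward Mip0 instead of popping.

```python
import math


def filtered_sample(mip_values, lod: float, min_lod_clamp: float) -> float:
    """Blend two adjacent mip levels the way a trilinear filter would.

    mip_values: one number per level, standing in for a texel's value (hypothetical).
    min_lod_clamp: finest LOD the filter may use, e.g. the last fully loaded mip.
    """
    coarsest = len(mip_values) - 1
    lod = min(max(lod, min_lod_clamp), coarsest)  # never go finer than the clamp
    lower = math.floor(lod)
    upper = min(lower + 1, coarsest)
    frac = lod - lower  # the fractional part is the blend weight toward the coarser level
    return mip_values[lower] * (1.0 - frac) + mip_values[upper] * frac


mips = [0.0, 10.0, 20.0]  # pretend Mip0 = 0, Mip1 = 10, Mip2 = 20
# The sampler wants Mip 0; the clamp eases 0.8 -> 0.4 -> 0 over three frames.
for frame, clamp in enumerate([0.8, 0.4, 0.0], start=1):
    print(f"frame {frame}: clamp {clamp} -> {filtered_sample(mips, 0.0, clamp):.1f}")
```

The output moves 8.0, 4.0, 0.0: each frame shifts the blend further toward Mip0 rather than jumping there in one step.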

These hardware optimizations, combined with PRT+, make up what we know as Sampler Feedback Streaming. Its potential is as wild as that of Mesh Shaders and Ray Tracing.

Really can’t wait to see a true next-gen game with these capabilities enabled!

Sierra 117, signing off
